Ph.D. Thesis

Dissertation title: «Nonlinear conjugate gradient methods for optimization and neural network training» (in Greek).

Supervisor: Professor P. Pintelas, Department of Mathematics, University of Patras.

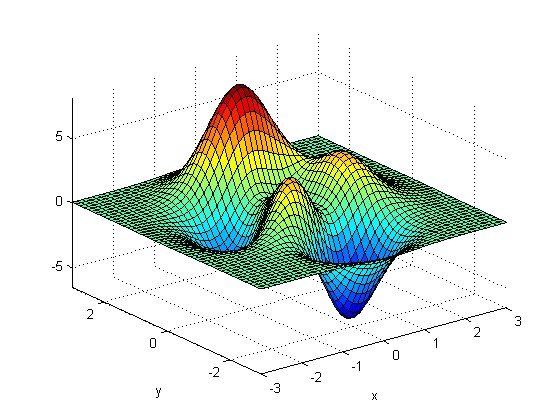

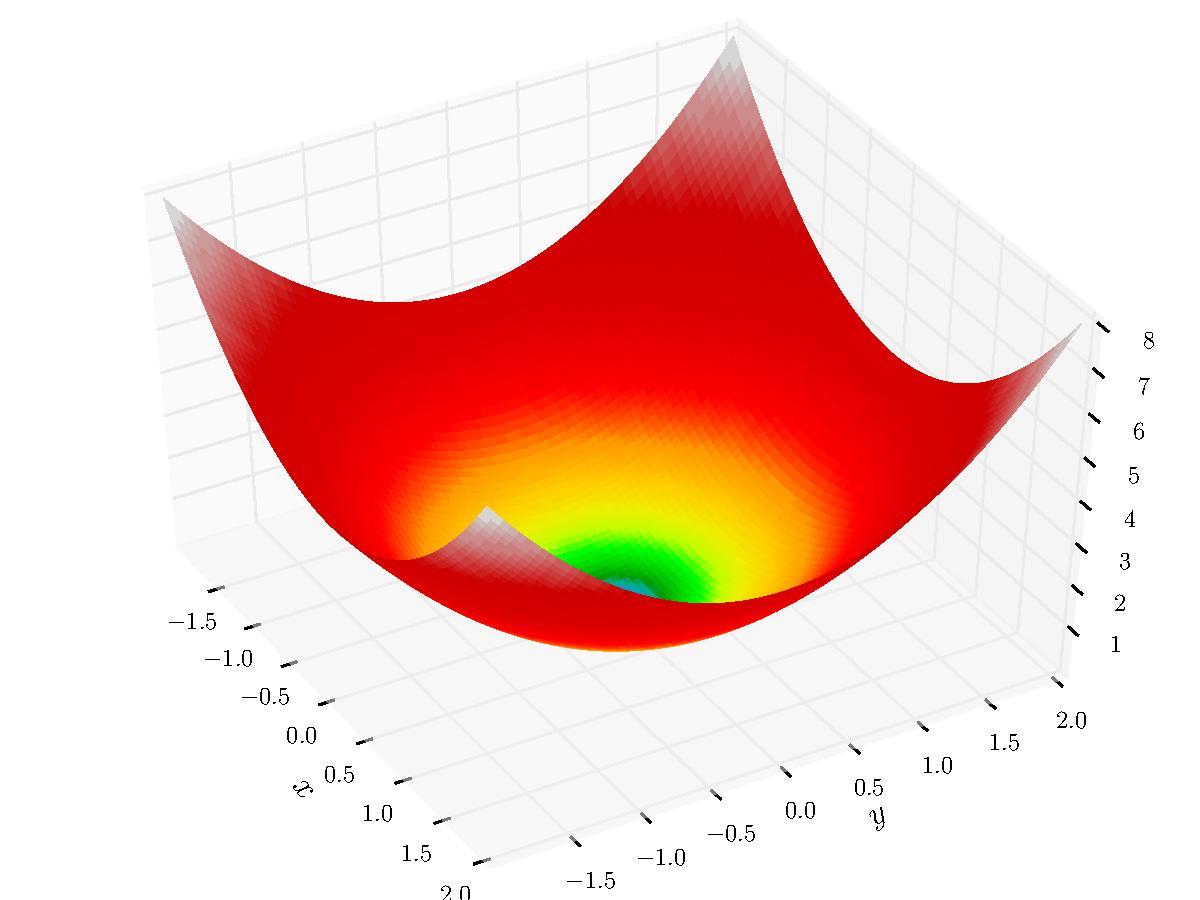

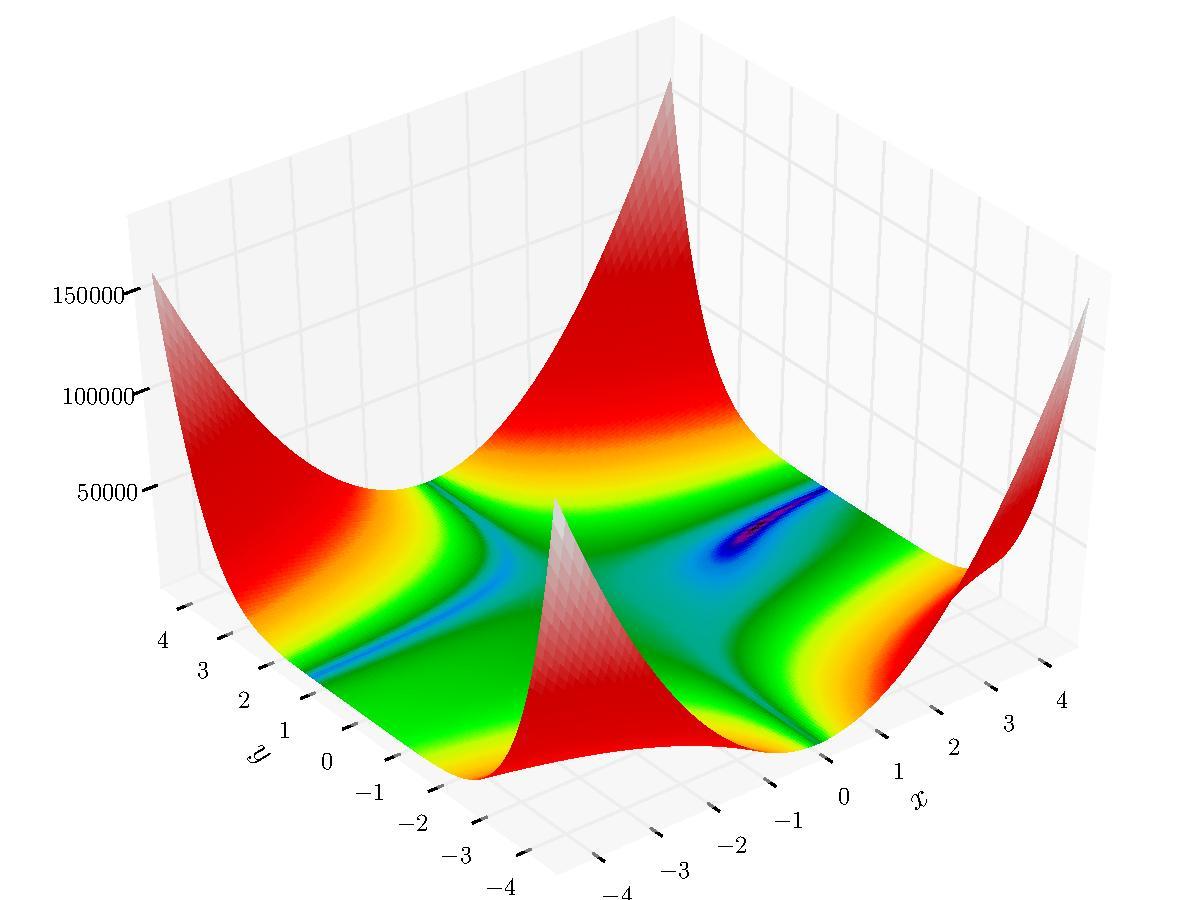

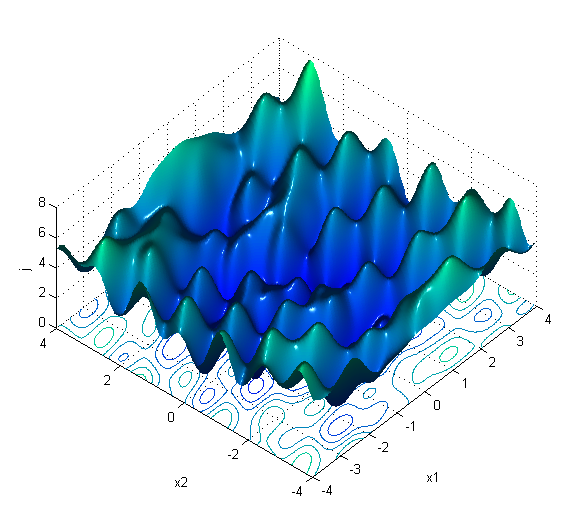

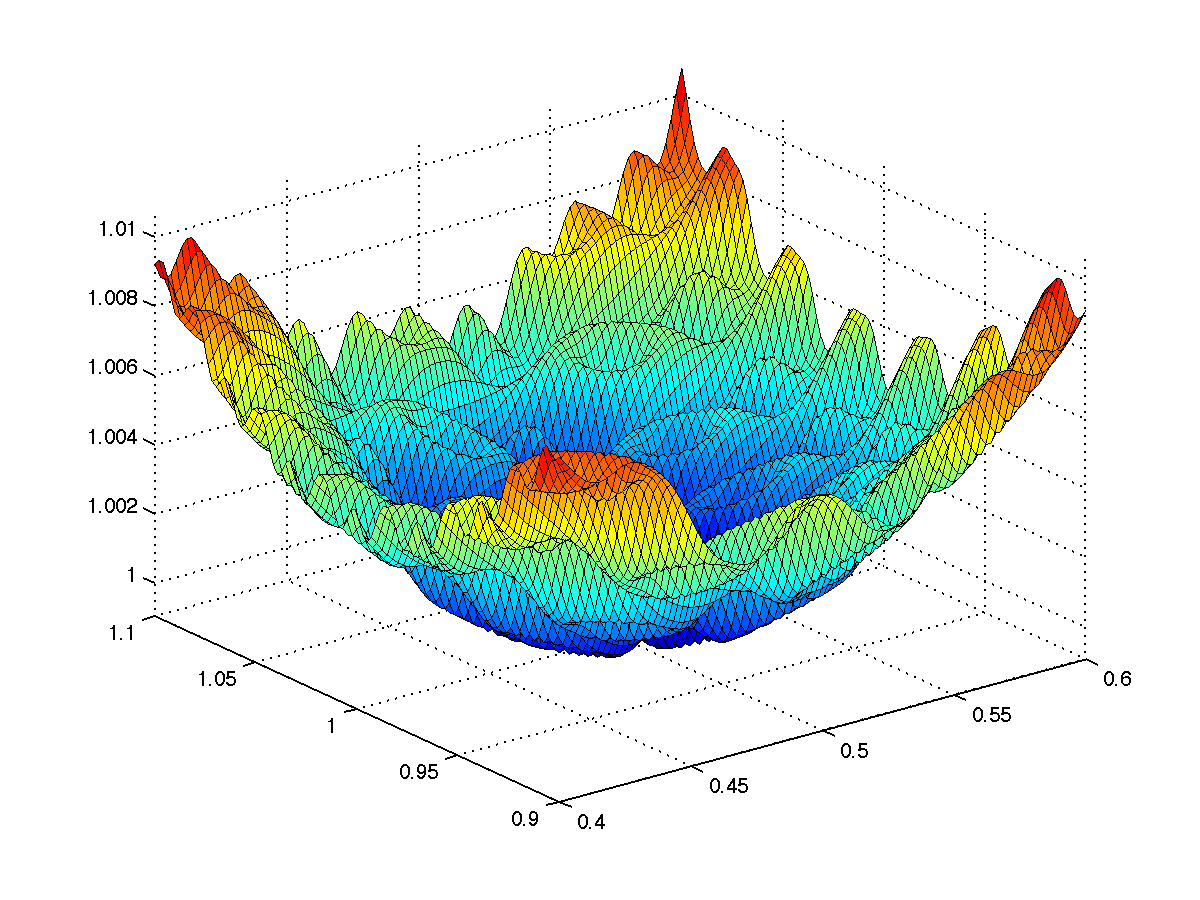

Abstract: This thesis develops new conjugate gradient methods for unconstrained optimization, together with their mathematical foundation, and studies new neural network training methods and their applications. We propose two new conjugate gradient methods for unconstrained optimization. The proposed methods are based on new secant equations with strong theoretical advantages, i.e., they approximate the surface of the objective function with higher accuracy. Moreover, they ensure sufficient descent independently of the accuracy of the line search, thereby avoiding the usual inefficient restarts. Further, we establish the global convergence of the proposed methods for general functions under mild conditions. Our numerical results indicate that the proposed methods outperform classical conjugate gradient methods in both efficiency and robustness.

The second part of the thesis is devoted to the study and development of new neural network training algorithms. More specifically, we propose new training methods that preserve the advantages of classical conjugate gradient methods while ensuring sufficient descent with any line search, thereby avoiding the usual inefficient restarts. Moreover, we establish the global convergence of the proposed methods for general functions. Encouraging numerical experiments on well-known benchmarks verify that the presented methods provide fast, stable and reliable convergence, outperforming classical training methods. Finally, the dissertation concludes with a new curvilinear algorithm for training large neural networks, based on an analysis of the eigenstructure of the memoryless BFGS matrices. The proposed method preserves the strong convergence properties of the quasi-Newton direction while exploiting the nonconvexity of the error surface through the computation of a negative curvature direction, without any matrix storage or factorization. Our numerical experiments show that the proposed method outperforms other popular training methods on well-known benchmarks. Furthermore, to improve the generalization capability of trained neural networks, we explore the incorporation of several dimensionality reduction techniques as a preprocessing step. To this end, we experimentally evaluate the application of dimensionality reduction techniques for increasing the generalization capability of neural networks on large biomedical datasets.
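
To illustrate what "sufficient descent independent of the accuracy of the line search" can mean in practice, the following is a minimal Python sketch. It does not reproduce the methods of the thesis, which the abstract does not specify. Instead it assumes a well-known three-term modification of the Polak-Ribière-Polyak direction, for which g_k^T d_k = -||g_k||^2 holds by construction, so no restart is needed to guarantee a descent direction. The function names, the Armijo backtracking parameters and the quadratic test problem are all hypothetical choices made for illustration.

```python
def dot(u, v):
    """Euclidean inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def three_term_prp_direction(g_new, g_old, d_old):
    """Three-term PRP-type direction (an assumed stand-in, not the thesis method).

    d = -g_new + beta * d_old - theta * y, with y = g_new - g_old,
    beta = g_new.y / ||g_old||^2 and theta = g_new.d_old / ||g_old||^2.
    The beta and theta terms cancel in g_new.d, so g_new.d = -||g_new||^2.
    """
    gg_old = dot(g_old, g_old)
    y = [a - b for a, b in zip(g_new, g_old)]
    beta = dot(g_new, y) / gg_old
    theta = dot(g_new, d_old) / gg_old
    return [-gn + beta * di - theta * yi for gn, di, yi in zip(g_new, d_old, y)]


def minimize_cg(f, grad, x0, max_iter=500, tol=1e-8):
    """Nonlinear CG with a simple Armijo backtracking line search."""
    x = list(x0)
    g = grad(x)
    d = [-gi for gi in g]          # first direction: steepest descent
    iterations = 0
    while iterations < max_iter and dot(g, g) ** 0.5 >= tol:
        fx, slope, t = f(x), dot(g, d), 1.0
        accepted = False
        for _ in range(60):        # halve the step until Armijo holds
            x_new = [xi + t * di for xi, di in zip(x, d)]
            if f(x_new) <= fx + 1e-4 * t * slope:
                accepted = True
                break
            t *= 0.5
        if not accepted:           # no acceptable step found: stop
            break
        g_new = grad(x_new)
        d = three_term_prp_direction(g_new, g, d)
        x, g = x_new, g_new
        iterations += 1
    return x, iterations


# Hypothetical test problem: an ill-conditioned convex quadratic.
def quad(x):
    return 0.5 * (x[0] ** 2 + 10.0 * x[1] ** 2)


def quad_grad(x):
    return [x[0], 10.0 * x[1]]


x_final, n_iter = minimize_cg(quad, quad_grad, [3.0, -2.0])
```

Because the descent identity holds for every step size, a crude backtracking search is enough to obtain a monotone decrease of the objective in this sketch; a production implementation would normally use a more careful line search and stopping test.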